Artificial intelligence engines powered by Large Language Models (LLMs) are becoming an increasingly accessible way of getting answers and advice, in spite of known racial and gender biases.

A new study has uncovered strong evidence that we can now add political bias to that list, further demonstrating the potential of the emerging technology to unwittingly and perhaps even nefariously influence society's values and attitudes.

The research was carried out by computer scientist David Rozado, from Otago Polytechnic in New Zealand, and raises questions about how we might be influenced by the bots that we're relying on for information.

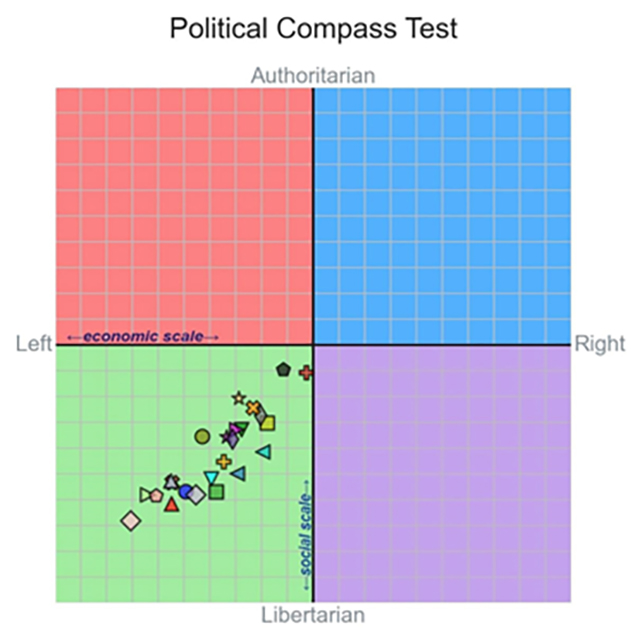

Rozado ran 11 standard political questionnaires, such as The Political Compass test, on 24 different LLMs, including ChatGPT from OpenAI and the Gemini chatbot developed by Google, and found that the average political stance across all the models wasn't close to neutral.

"Most existing LLMs display left-of-center political preferences when evaluated with a variety of political orientation tests," says Rozado.

The average left-leaning bias wasn't strong, but it was significant. Further tests on custom bots – where users can fine-tune the LLMs' training data – showed that these AIs could be influenced to express political leanings using left-of-center or right-of-center texts.
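To see how a lean can be modest in size yet still statistically significant, here is a minimal Python sketch. The scores are made up for illustration and are not taken from the study, the -10 to +10 scale is an assumption, and the one-sample t-test is simply a common way to compare an average against a neutral point, not necessarily the analysis Rozado used.

```python
import math
import statistics

# Hypothetical scores, one per model, on an assumed scale from
# -10 (left) to +10 (right). Illustrative only, NOT data from the study.
scores = [-2.1, -1.4, -0.8, -1.9, -0.5, -1.2, -2.4, -0.9, -1.6, -1.1, -0.3, -1.7]

def one_sample_t(values, neutral=0.0):
    """Return (mean, t statistic) for a test of the mean against `neutral`."""
    mean = statistics.mean(values)
    # Standard error of the mean: sample standard deviation / sqrt(n)
    std_err = statistics.stdev(values) / math.sqrt(len(values))
    return mean, (mean - neutral) / std_err

mean, t_stat = one_sample_t(scores)
print(f"mean = {mean:.2f}, t = {t_stat:.2f}")
```

In this toy example the average sits only a little to the left of zero, but because the individual scores cluster on the same side, the t statistic ends up far from zero. That is roughly what "not strong, but significant" means.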

Rozado also looked at foundation models like GPT-3.5, which the conversational chatbots are based on. There was no evidence of political bias here, though without the chatbot front-end it was difficult to collate the responses in a meaningful way.

With Google pushing AI answers for search results, and more of us turning to AI bots for information, the worry is that our thinking could be affected by the responses being returned to us.

"With LLMs beginning to partially displace traditional information sources like search engines and Wikipedia, the societal implications of political biases embedded in LLMs are substantial," writes Rozado in his published paper.

Quite how this bias is getting into the systems isn't clear, though there's no suggestion it's being deliberately planted by the LLM developers. These models are trained on vast amounts of online text, and an imbalance of left-leaning over right-leaning material in the mix could have an influence.

The dominance of ChatGPT training other models could also be a factor, Rozado says, because the bot has previously been shown to be left of center when it comes to its political perspective.

Bots based on LLMs are essentially using probabilities to figure out which word should follow another in their responses, which means they're regularly inaccurate in what they say even before different kinds of bias are considered.
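As a rough illustration of that idea, the Python sketch below picks a "next word" from a small table of probabilities. The prompt, the words, and the probabilities are all hypothetical; a real LLM calculates a distribution like this over its entire vocabulary at every step.

```python
import random

# Hypothetical next-word probabilities after the prompt "The sky is".
# These values are invented for illustration only.
next_word_probs = {"blue": 0.70, "clear": 0.15, "falling": 0.10, "green": 0.05}

def sample_next_word(probs, rng):
    """Pick one word at random, weighted by its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the run is repeatable
picks = [sample_next_word(next_word_probs, rng) for _ in range(1000)]

# Count how often each word was chosen across 1,000 draws
print({w: picks.count(w) for w in next_word_probs})
```

The likeliest word wins most of the time, but less plausible words still turn up now and then, which is one reason a chatbot's answer can be fluent and wrong at once.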

Despite the eagerness of tech companies like Google, Microsoft, Apple, and Meta to push AI chatbots on us, perhaps it's time for us to reassess how we should be using this technology – and prioritize the areas where AI really can be useful.

"It is crucial to critically examine and address the potential political biases embedded in LLMs to ensure a balanced, fair, and accurate representation of information in their responses to user queries," writes Rozado.

The research has been published in PLOS ONE.